Interrogating the brain on trial: neuroscience, ethics and the law

This is the first in an ongoing series by summer interns at the Center for Medical Ethics and Health Policy, undergraduate and graduate students interested in emerging ethical issues.

An assassination attempt. Jodie Foster. A brain scan. What do all these things have in common? John Hinckley, Jr.

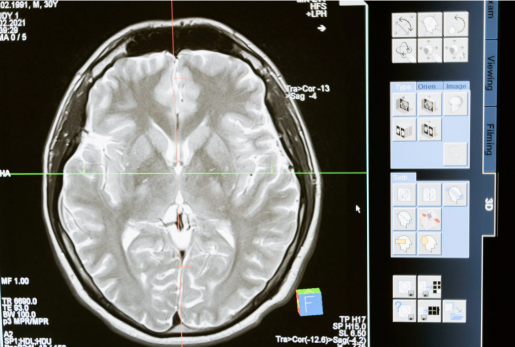

Hinckley was brought to trial in 1982 for the attempted assassination of former President Ronald Reagan, an attempt prompted by Hinckley’s obsession with Jodie Foster. At his trial, the jury was presented with CAT scans of his brain. Dr. Marjorie LeMay, a neuroradiologist, testified that the images showed Hinckley’s brain was “shrunken” and exhibited “organic brain disease.” Dr. LeMay’s testimony did not address how any abnormalities correlated with Hinckley’s capacities, such as intentional action and responsibility. Nevertheless, based on her testimony, the jury found the evidence convincing enough to deem Hinckley not guilty by reason of insanity, and he was committed to institutional psychiatric care.

Responsibility is not a straightforward concept, as its meaning can differ considerably across contexts. In law, responsibility refers to whether an individual committed a crime. Criminal punishment, however, also depends on the intentionality of an action. Intentionality comes in degrees; for instance, the difference between manslaughter (unintentional harm leading to loss of life) and murder (intention to cause loss of life) reflects a difference in the degree of intent.

In ethics, on the other hand, responsibility is viewed in terms of moral action: How blameworthy or praiseworthy is an individual for the action in question? Intentionality is also a complex, contested area of philosophy, and trying to establish a connection between an individual’s “intentional” thoughts and actions quickly becomes murky.

It is also important to note that, historically, it has been incredibly difficult to prove someone “not guilty by reason of insanity” (often shortened to NGRI). The diagnosis of a mental illness is not, in and of itself, sufficient to absolve someone of responsibility for a crime in a court of law (nor, in my opinion, should it be).

Because a successful NGRI defense requires more than a diagnosis of mental illness (for example, evidence about the defendant’s degree of capacity or ability to appreciate the difference between right and wrong), the defense is raised in less than 1% of criminal cases.

Blaming characteristics of the brain for behavior is not a new phenomenon. In 1834, a 9-year-old boy was brought to trial in Maine for “maiming” and “attempting to maim” an 8-year-old boy. The defense invoked the then-accepted practice of phrenology, in which the bumps on an individual’s skull were examined and correlated with particular character traits.

The 9-year-old boy’s lawyer sought leniency on the grounds that the boy had suffered a head injury when he was younger, resulting in “damage to the Organ of Destructiveness,” which had made him more aggressive and destructive. Although the judge ultimately threw out this evidence (and phrenology was eventually debunked and abandoned as a practice), the case marked a significant shift toward explaining socially unacceptable behavior in terms of characteristics of the brain.

Advances in technology might suggest that Hinckley’s 20th-century defense was better supported than the phrenology defense of the 19th century. Yet the two cases share common themes worth considering.

Today, many neuroscientists doubt that any kind of brain imaging will provide insight into a defendant’s motivations and intentional states at the time of a crime. Neuroscientists are also hesitant to translate correlations between brain activity and intentional behavior into the kinds of claims the legal system relies on: claims about responsibility and free will. Yet those are precisely the questions that matter in courts of law, and this scientific caution stands in direct contrast to the jury’s decision in Hinckley’s case.

Although experts are hesitant about neuroscientific data being used in this way, it is still being used in court. Attorneys have introduced such data and expert testimony as admissible evidence at a rapidly growing rate: in 2013, the count exceeded 1,000 cases, and it continues to rise, to the point that the exact current number is unknown. Most of these cases concern defendants’ diminished capacity, competency, and supposed “insanity.” Applying neuroscientific data in this way not only affects legal decisions but can also shape public understanding of what neuroscience data can actually tell us, leading to misconceptions.

One study, Wardlaw et al. (2011), found not only that expert neuroscientists grossly underestimated how much neuroscience is being used in court, but also that a majority of the public believed neuroimaging could tell us much more than it could then (or can now), such as detecting lying and deception.

This speaks to a larger problem, too: Lawyers, legal scholars, scientists, physicians and ethicists often use theoretical concepts that share the same name but do not correspond to one another in definition or in practice. For example, legal concepts of responsibility cannot be mapped directly onto concepts of intentional action measured in a lab.

If anything, this mismatch demonstrates the growing urgency of sharing research data publicly and among investigators, not only to get stakeholders from different domains on the same page, but also to emphasize the importance of contextualizing neural data.

It is evident that there are gaps between what is going on in the scientific world, the legal world and the world of public opinion. There needs to be better education on the limits of neuroscientific data, as well as further interdisciplinary work towards a consensus on the meanings and implications of concepts such as responsibility and intentionality.

By Kathryn Petrozzo, summer intern, Center for Medical Ethics and Health Policy, Ph.D. candidate at the University of Utah