No bioinformatics experience required to use new CRISPR data analytical tool

A new computational tool offers a more sensitive, more accurate, faster and more user-friendly way to model CRISPR data. Developed in the lab of Dr. Zhandong Liu, the tool is designed to make life easier for bench scientists who need to identify genes involved in particular diseases, an arduous and time-consuming process.

“This project arose from a collaboration with the lab of Dr. Huda Zoghbi, which studies neurological diseases. One of the lab’s goals is to identify modifier genes that can be targeted with drugs,” said Liu, associate professor of pediatrics and neurology at Baylor College of Medicine and director of the Bioinformatics Core in the Jan and Dan Duncan Neurological Research Institute at Texas Children’s Hospital.

Modifier genes are genes that affect the expression of other genes. The idea is to treat a disease not by directly targeting the gene that causes it, but by targeting specific modifier genes that can reduce that gene’s harmful effects.

To identify candidate modifier genes, the Zoghbi lab uses CRISPR technology to screen the entire genome.

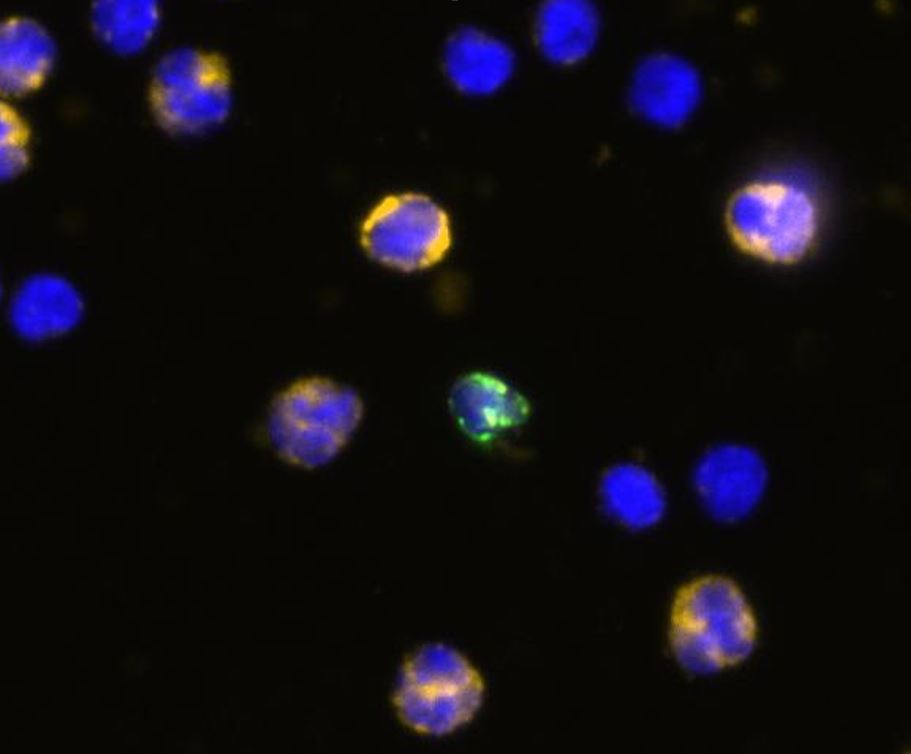

“CRISPR screens generate data sets of many thousands of genes, so the researchers needed a tool to narrow the list to a number of candidates that would be practical to test in the lab,” Liu said. “Although there are several computational methods available, to analyze CRISPR screen data accurately it is important to use analytical tools that model the natural characteristics of the data as precisely as possible. We thought that the available computational tools did not fulfill these criteria.”

“CRISPR data usually come with a lot of variability that can make data interpretation difficult,” said first author Dr. Hyun-Hwan Jeong, a postdoctoral associate in the Liu lab. “We developed a new method that takes into consideration the variability of CRISPR screen data; it can better capture both large and small variations in the data.”

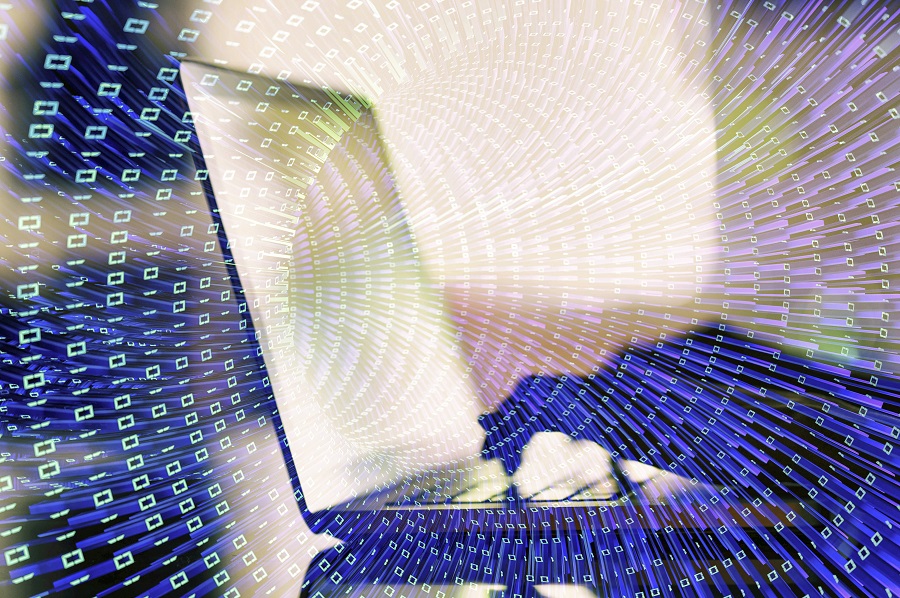

“When compared with the eight most commonly used methods, our web tool is more sensitive and accurate, quicker and more user friendly.”

The new computational tool, which uses beta-binomial modeling, enables lab scientists to better estimate the statistical significance of each gene they measure. It also lets them generate a list of genes that are likely candidate targets for therapy, one that includes as many candidate genes as possible while minimizing false positives.
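For readers curious about what beta-binomial modeling looks like in practice, here is a minimal sketch in Python. It is not the tool described in this article. The function names, the read counts and the shape parameters `a` and `b` are all hypothetical, chosen only to show how a beta-binomial distribution can assign a tail probability to a count that varies more than a simple binomial model would allow.

```python
from math import exp, lgamma


def log_beta(x: float, y: float) -> float:
    """Natural log of the Beta function B(x, y)."""
    return lgamma(x) + lgamma(y) - lgamma(x + y)


def betabinom_pmf(k: int, n: int, a: float, b: float) -> float:
    """P(X = k) when X ~ BetaBinomial(n, a, b)."""
    log_choose = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return exp(log_choose + log_beta(k + a, n - k + b) - log_beta(a, b))


def upper_tail_pvalue(k: int, n: int, a: float, b: float) -> float:
    """P(X >= k): chance of seeing a count at least as large as k."""
    total = sum(betabinom_pmf(i, n, a, b) for i in range(k, n + 1))
    return min(1.0, total)  # guard against tiny floating-point overshoot


if __name__ == "__main__":
    # Hypothetical example: 15 of 20 reads map to one guide, under a null
    # model with illustrative (assumed) shape parameters a=2, b=5.
    print(upper_tail_pvalue(15, 20, 2.0, 5.0))
```

A smaller tail probability suggests the observed count is harder to explain by the null model alone. A real analysis would also estimate the shape parameters from the screen data and correct for testing many genes at once, which this sketch leaves out.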

“What is really exciting about this computational tool is that researchers are not restricted to having a bioinformatics or computational biologist help them analyze their ‘big data,’” said Zoghbi, who is professor of molecular and human genetics and of pediatrics and neuroscience at Baylor and director of the Jan and Dan Duncan Neurological Research Institute at Texas Children’s Hospital. She also is an investigator at the Howard Hughes Medical Institute. “This tool empowers the wet lab scientist who is not trained in bioinformatics to analyze data to identify the best gene candidates.”

To read all the details of this work, visit the journal Genome Research.

Seon Young Kim and Maxime W. C. Rousseaux also contributed to this work. The authors are affiliated with one or more of the following institutions: Baylor College of Medicine, Texas Children’s Hospital and Howard Hughes Medical Institute.

This work was supported by National Institute of General Medical Sciences R01-GM120033, National Science Foundation – Division of Mathematical Sciences DMS-1263932, Cancer Prevention Research Institute of Texas RP170387, Houston Endowment and Chao Family Foundation. Support also was provided by Huffington Foundation, Howard Hughes Medical Institute and the Parkinson’s Foundation Stanley Fahn Junior Faculty Award PF-JFA-1762.